Blog & Demos

Tutorials, case studies, benchmarks, and open-source demos — everything you need to build with small language models.

Teaching Small Language Models New Skills - Training a Local Cybersecurity Agent

How distil labs partnered with Octodet to train a small language model that outperforms LLMs 30x its size at analyzing cybersecurity logs, while running entirely on-premises to meet strict privacy requirements.

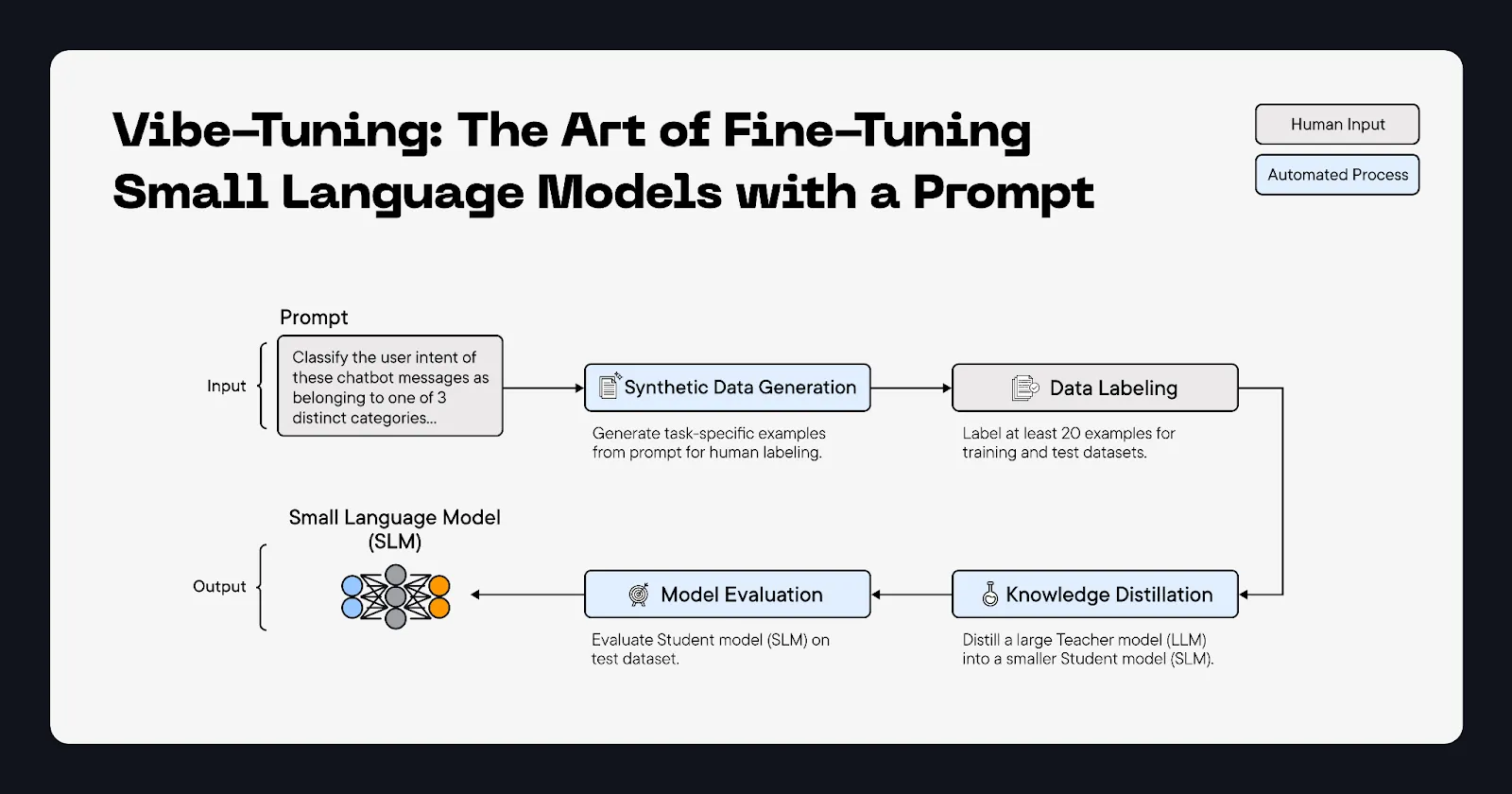

Vibe-Tuning: The Art of Fine-Tuning Small Language Models with a Prompt

Fine-tuning is a pain: you need datasets, ML expertise, and a stack of GPUs just to get started. Not anymore. With model vibe-tuning, you go from a prompt to a production-ready model without these headaches. This blog post shows you exactly how to build one, starting with just a prompt.

AI Slop Detector: Catch AI-generated text with a 270M model that runs in your browser

A fine-tuned 270M-parameter model that detects AI-generated text entirely in your browser: no API keys, no cloud, no data leakage. It matches the accuracy of its 120B teacher model while being over 400x smaller.

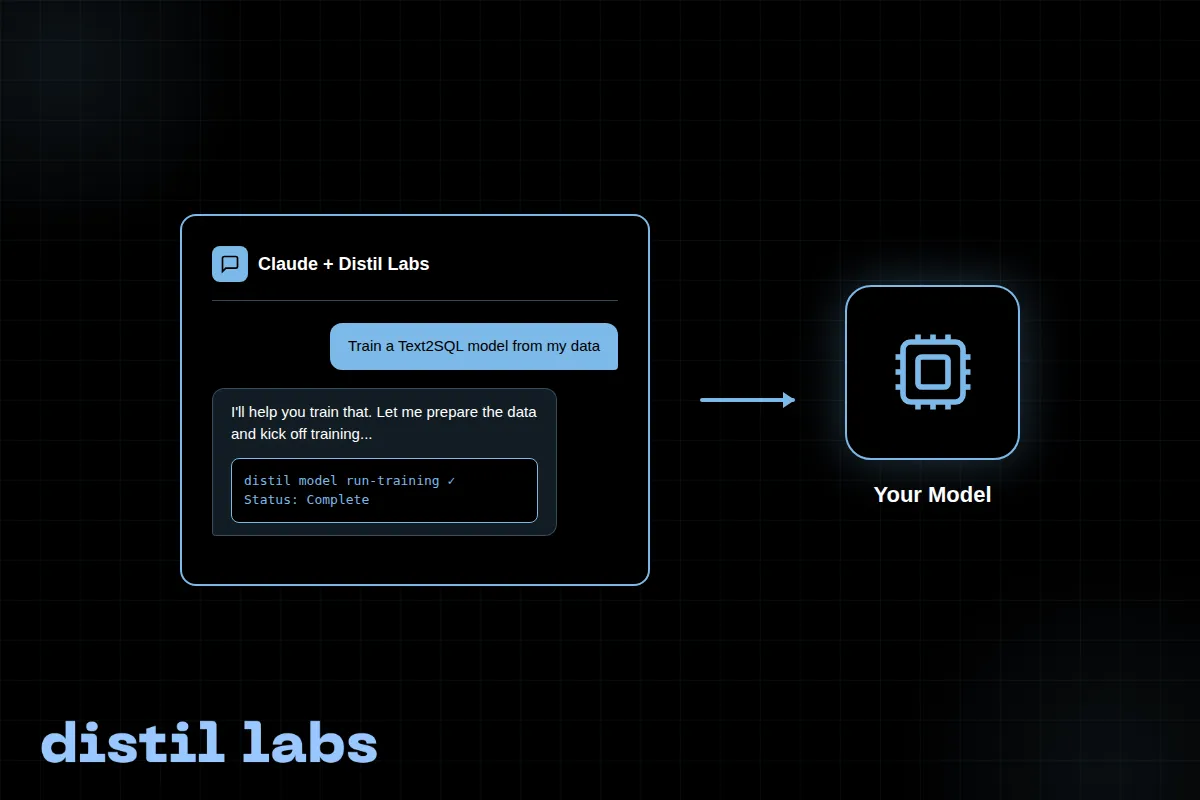

Train Your SLM with the distil labs Claude Skill

A step-by-step walkthrough of training a Text2SQL small language model using the distil labs Claude Code skill, going from raw conversation data to a working local model in a single conversation.

Text2SQL: Natural Language CSV Query Tool

Query your CSV data using natural language questions. A fine-tuned 0.6B parameter model converts questions into SQL queries and executes them locally — no cloud required.

SHELLper: Multi-turn Bash Function Calling Model

A fine-tuned 0.6B model that converts natural language into bash commands with 100% multi-turn tool-call accuracy. Runs locally with full privacy.

Text2SQirreL 🐿️: Query your data in plain English

A fine-tuned small language model that converts plain English questions into executable SQL queries. Runs locally with no API keys, no cloud dependencies, and full privacy — matching the accuracy of a 685B teacher model.

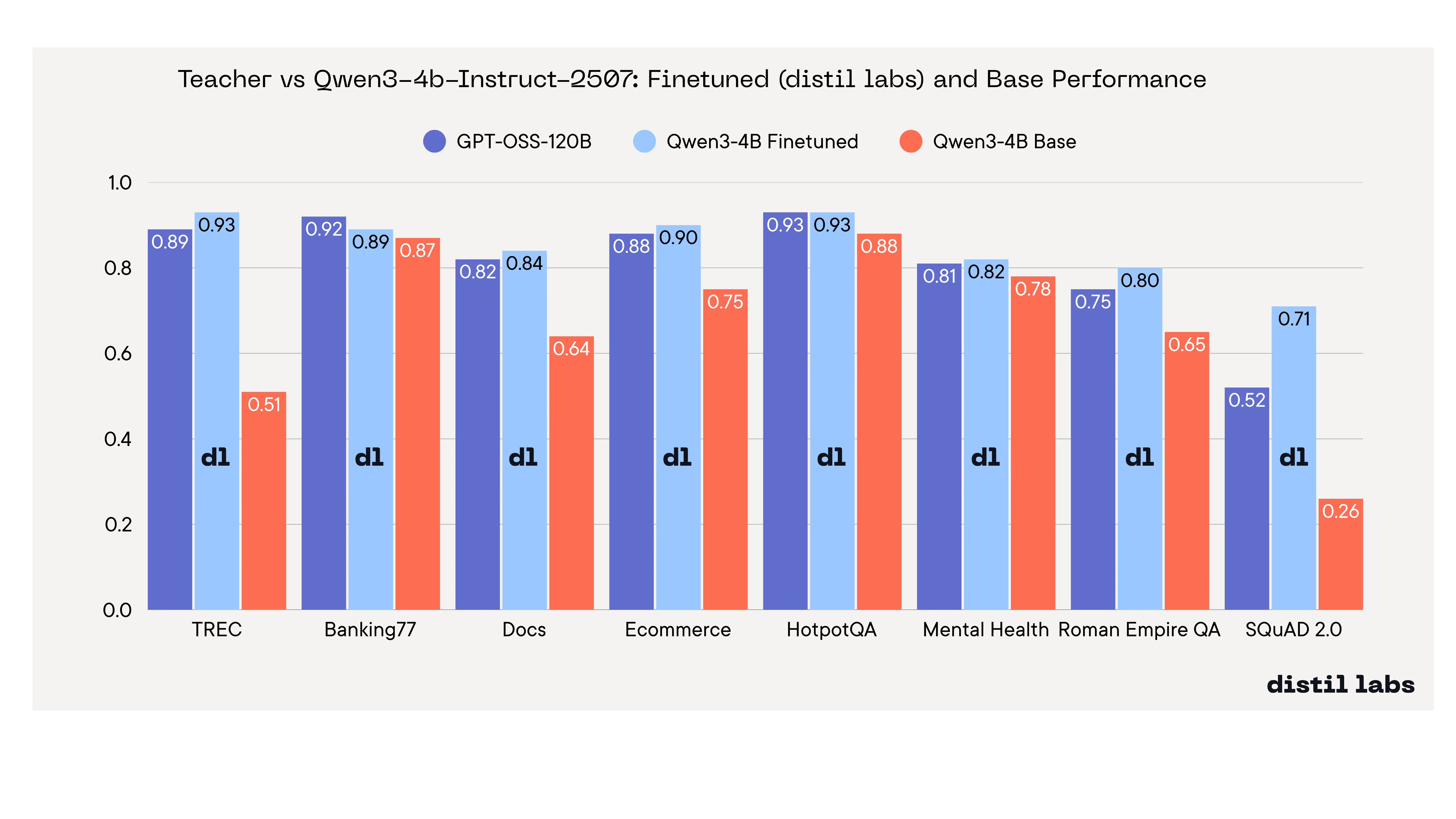

We Benchmarked 12 Small Language Models Across 8 Tasks to Find the Best Base Model for Fine-Tuning

A systematic benchmark of 12 small language models across 8 tasks identifies Qwen3-4B as the best base model for fine-tuning, with fine-tuned models matching or exceeding a 120B+ teacher. Smaller models such as Llama-3.2-1B show the highest tunability.

Gitara: How we trained a 3B Function-Calling Git Agent for Local Use

We fine-tuned a small, tool-calling language model to turn plain-English questions into git commands with the accuracy of a cloud LLM.